Edge AI Vision Pipeline: Five Deep Learning Models on a Single Hardware Accelerator

About the Client

Company’s Request

Technology Set

| Category | Items |
| --- | --- |
| AI Models | YOLOv8s (object detection); SCRFD 2.5G (face detection); ArcFace MobileFaceNet (face recognition); YOLOv8n ReLU6 (license plate detection); LPRNet / fast_plate_ocr (license plate OCR) |
| Hardware | Raspberry Pi (CM4/CM5); Hailo-8 NPU (26 TOPS); Hailo-8L NPU (13 TOPS) |
| Languages & Frameworks | Python 3.11; OpenCV; NumPy; FastAPI; Pydantic |
| AI & ML Tools | Hailo Dataflow Compiler; HailoRT; ONNX Runtime; Norfair (object tracking); fast-plate-ocr |
| Infrastructure | Docker; FFmpeg · go2rtc (RTSP relay); MQTT; SQLite; Balena (device management) |

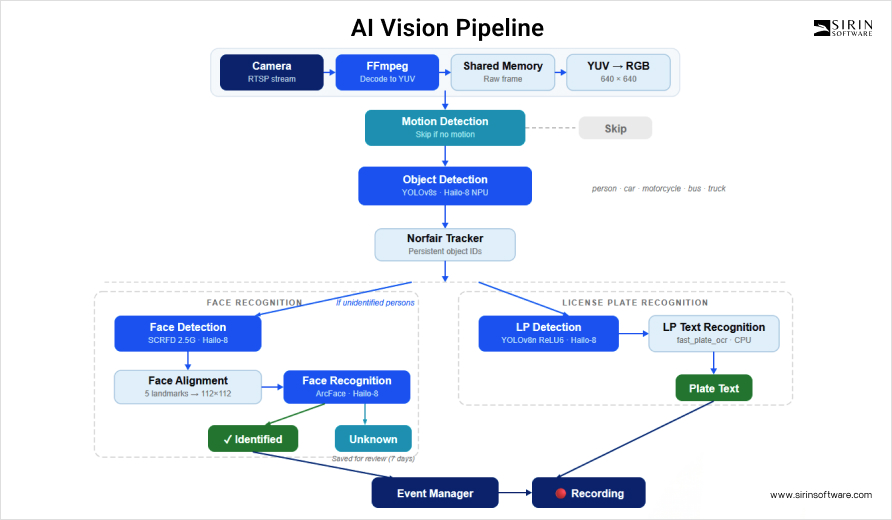

The initial request was to build a real-time AI vision pipeline for their edge hardware platform. The system had to process live camera streams and run five different AI tasks – object detection, face detection, face recognition, license plate detection, and license plate text reading. Everything had to work on a single board with a neural processing unit.

When we joined the project, some early prototype code already existed, and the hardware direction was set – Raspberry Pi with a Hailo accelerator. But the AI pipeline itself did not exist yet. We took on the entire AI software stack – from picking and porting the models, to writing inference and postprocessing code, to figuring out how five models can share one accelerator without starving each other. The multi-camera architecture was also ours to design.

On top of that, there was hardware-level work to do before any of the AI code mattered. The Hailo drivers on the Raspberry Pi did not simply install and work; we had to troubleshoot a few issues first. In one case, the Hailo card was not being detected at all, and the fix turned out to be as simple as reseating the physical card. After the drivers were stable, we moved on to the AI pipeline itself.

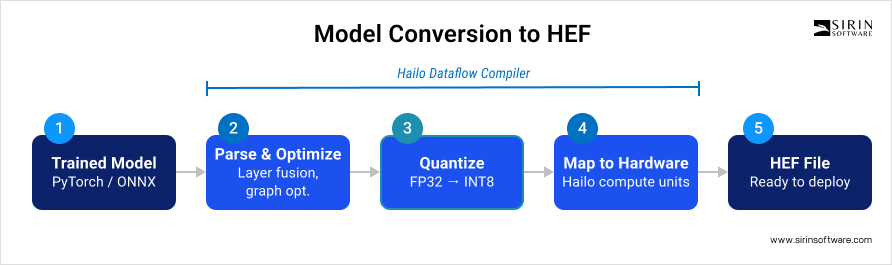

Porting Models to the Hailo Platform

Before any AI work could happen, every model had to be converted to HEF – Hailo Executable Format. This is a compiled binary that the Hailo NPU can run directly. The conversion process starts with a trained model in PyTorch or ONNX format. The Hailo Dataflow Compiler takes the model graph, fuses layers together, and runs optimization passes. After that comes quantization – converting everything from 32-bit floating-point down to 8-bit integers using calibration data. Once that is done, the compiler maps operations onto the Hailo’s compute units and produces a HEF file.

Quantization turned out to be one of the trickiest parts of the whole project. The Hailo hardware only works with integer math, so there is no way around it – every model has to be quantized. We went with post-training quantization using representative calibration datasets. What happens under the hood is that the compiler looks at how values are distributed across your calibration data and then decides on a scale and a zero-point for each layer. Both of these get baked into the HEF file. At runtime, the system pulls them out and uses them to convert raw integer outputs back into real numbers – a step called dequantization. The math is straightforward: take the integer output, subtract the zero-point, multiply by the scale. Skip this step, and your bounding box coordinates and confidence scores come out as garbage.
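The dequantization step described above can be sketched in a few lines. This is a minimal illustration in plain Python, not the project's code: the function name `dequantize` and the sample scale and zero-point values are made up for the example, and a production pipeline would most likely do the same arithmetic on NumPy arrays.

```python
def dequantize(raw, scale, zero_point):
    """Map quantized integer outputs back to real values.

    Implements the formula from the text: (integer - zero_point) * scale.
    """
    return [(q - zero_point) * scale for q in raw]


# Hypothetical per-layer parameters; in practice they come from the HEF file.
raw_scores = [10, 12, 138]
print(dequantize(raw_scores, scale=0.5, zero_point=10))
```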

A less obvious problem was that quantized models produce slightly different score distributions than the original floating-point versions. Confidence thresholds that worked well on the FP32 model did not always work after INT8 conversion. We had to re-tune all thresholds after quantization to get the right balance between catching real detections and filtering out false positives.
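One way to re-tune a threshold is to sweep candidate values over a labelled validation set and keep the one with the best F1 score. The article does not say which criterion we used, so treat the F1 choice, the function name `best_threshold`, and the sample data below as assumptions made for illustration.

```python
def best_threshold(scores, labels, candidates):
    """Return (threshold, f1) for the candidate with the highest F1 score.

    scores: confidence of each detection from the quantized model
    labels: 1 if the detection is a true object, 0 if it is a false positive
    """
    best_t, best_f1 = candidates[0], -1.0
    for t in candidates:
        tp = sum(1 for s, y in zip(scores, labels) if s >= t and y)
        fp = sum(1 for s, y in zip(scores, labels) if s >= t and not y)
        fn = sum(1 for s, y in zip(scores, labels) if s < t and y)
        f1 = 2 * tp / (2 * tp + fp + fn) if tp else 0.0
        if f1 > best_f1:
            best_t, best_f1 = t, f1
    return best_t, best_f1


# Hypothetical validation data: one true detection scores just below 0.5.
scores = [0.9, 0.8, 0.45, 0.4, 0.2]
labels = [1, 1, 1, 0, 0]
print(best_threshold(scores, labels, [0.3, 0.42, 0.5, 0.6]))
```

In this toy data a threshold of 0.5 would miss the detection at 0.45, which is the kind of shift that shows up after INT8 conversion.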

Each model was compiled into two HEF variants: one for the Hailo-8 (26 TOPS) and one for the Hailo-8L (13 TOPS). This way, the system can run on different hardware configurations without code changes.

Choosing the Models

We selected five models for the pipeline. Each choice involved trade-offs between accuracy, speed, and how well the model survived quantization.

For general object detection, we picked YOLOv8s. It detects persons, cars, motorcycles, buses, and trucks. We chose the “small” variant over the “nano” because it gives better accuracy across multiple classes. Of the 80 classes in the COCO dataset, only these five are relevant for surveillance, so we filter everything else out during postprocessing.

For face detection, we went with SCRFD 2.5G. We also tested RetinaFace and MTCNN, but SCRFD stood out because its architecture puts more computation into the most informative parts of the network. It achieves high accuracy with only 2.5 GFLOPs, which is important when you share one accelerator between five models.

Face recognition uses ArcFace with a MobileFaceNet backbone. The standard ArcFace model uses ResNet, which is too large for the Hailo’s memory. MobileFaceNet achieves about 99% accuracy on the LFW benchmark but with far fewer parameters. This made the difference between the model fitting on the device or not.

For license plate detection, we used a YOLOv8n model – the nano variant. Since this is a single-class task (just “license plate”), the smaller model is enough. It runs roughly four times faster than YOLOv8s. One deliberate architecture choice was using ReLU6 activation instead of the standard SiLU. ReLU6 clamps output values to the range of zero to six, and a tighter dynamic range means less information is lost when the model is quantized to INT8. We made this choice specifically with quantization in mind.

License plate text recognition has two paths. The primary one uses an ONNX-based OCR library called fast_plate_ocr, running on the CPU. The alternative uses LPRNet compiled to HEF and running on the Hailo device. We kept the CPU path as the default because it frees the Hailo for the more compute-heavy detection models, and it supports multiple plate formats – European, global, and Argentinian.

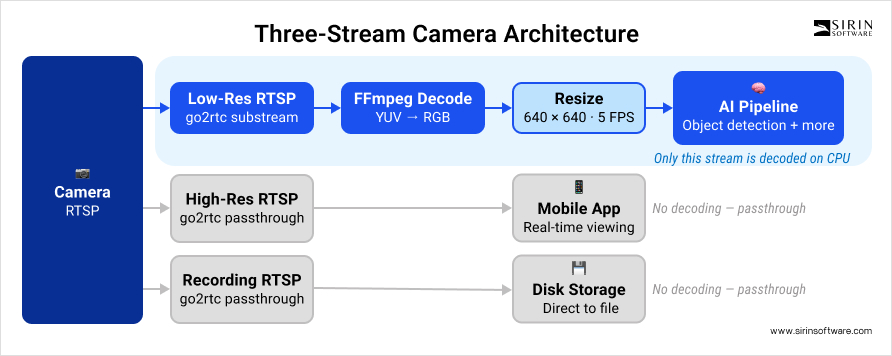

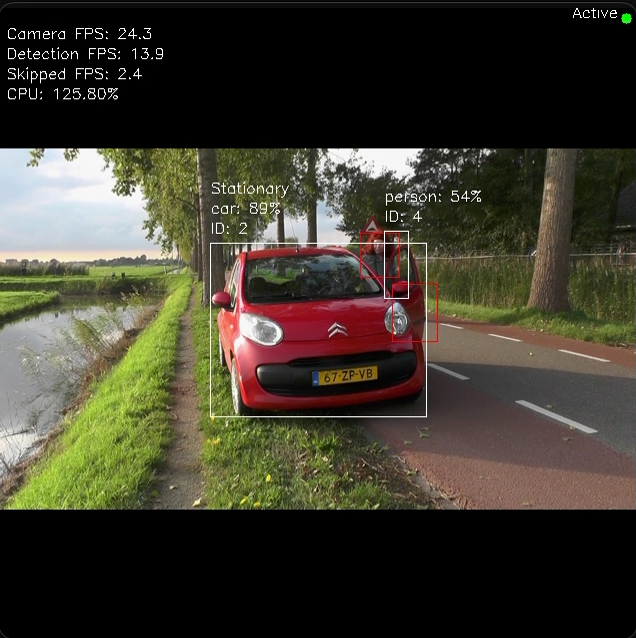

Object Detection and Tracking

The first stage of the pipeline detects people and vehicles. Each camera is configured with three separate RTSP streams, each for a different purpose. The low-resolution stream is decoded live and used for AI detection. For real-time viewing on mobile apps, a high-resolution stream is passed through without decoding. Recording works the same way – a third stream goes directly to disk, also without being decoded. This is what keeps the CPU load low: only the small detection frames are ever decoded, while the mobile app stream and the recording pass through at full resolution without the CPU touching them.

FFmpeg reads the low-resolution stream and writes raw YUV frames straight into shared memory. From there, the pipeline converts each frame to RGB and resizes it down to 640×640 for the YOLOv8s model. After inference on the Hailo device, the system filters results to keep only the five relevant classes and drops anything below the 0.5 confidence threshold.
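The filtering step can be sketched as follows. The class indices are the standard positions of these five classes in the 80-class COCO list; the `Detection` type and the function name are hypothetical and only serve the example.

```python
from dataclasses import dataclass

# Positions of the five relevant classes in the standard 80-class COCO list.
KEEP_CLASSES = {0: "person", 2: "car", 3: "motorcycle", 5: "bus", 7: "truck"}
CONF_THRESHOLD = 0.5


@dataclass
class Detection:
    class_id: int
    score: float
    box: tuple  # (x1, y1, x2, y2) in pixels


def filter_detections(detections):
    """Keep only relevant classes at or above the confidence threshold."""
    return [
        d for d in detections
        if d.class_id in KEEP_CLASSES and d.score >= CONF_THRESHOLD
    ]


raw = [
    Detection(0, 0.91, (10, 20, 110, 300)),   # person, kept
    Detection(16, 0.88, (200, 50, 260, 120)),  # dog, dropped (irrelevant class)
    Detection(2, 0.31, (300, 80, 500, 200)),   # car, dropped (low confidence)
]
print([KEEP_CLASSES[d.class_id] for d in filter_detections(raw)])
```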

To save compute, we added motion gating. Before running object detection, the system checks if there is any motion in the frame. If nothing is moving, object detection is skipped entirely. For cameras watching quiet scenes like a driveway at night or an empty hallway, this saves a large amount of processing time on the accelerator.
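The article does not describe how motion is checked, so the sketch below assumes the simplest option: frame differencing on the luma (Y) plane of two consecutive frames. The function name and both tuning parameters are hypothetical.

```python
def has_motion(prev_y, curr_y, pixel_delta=25, min_changed_fraction=0.01):
    """Return True if enough luma pixels changed between two frames.

    prev_y, curr_y: Y planes of consecutive frames as equal-length bytes
    pixel_delta: how much a pixel must change to count as "changed"
    min_changed_fraction: share of changed pixels that triggers detection
    """
    changed = sum(1 for a, b in zip(prev_y, curr_y) if abs(a - b) > pixel_delta)
    return changed >= min_changed_fraction * len(curr_y)


still = bytes(100)                        # 100 black pixels
moved = bytes([200] * 5 + [0] * 95)       # 5 pixels changed sharply
print(has_motion(still, still), has_motion(still, moved))
```

Object detection would then be called only when `has_motion` returns True, which is what keeps the accelerator idle on quiet scenes.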

After detection, the Norfair tracker takes over. It assigns persistent IDs to objects across frames using IOU-based matching, so the system knows “this is the same car as 2 seconds ago.” If an object stays in the same spot for more than 30 frames, the tracker marks it as stationary – this is how we distinguish parked cars from moving vehicles and avoid recording events where nothing is actually happening.
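To make the two ideas concrete, here is a minimal sketch of an IOU computation and a per-object stationary check. It does not use the Norfair API; the `StationaryCheck` class and the 0.9 overlap cut-off are assumptions for illustration. Only the 30-frame limit comes from the text.

```python
def iou(a, b):
    """Intersection over union of two (x1, y1, x2, y2) boxes."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union if union > 0 else 0.0


class StationaryCheck:
    """Flags a tracked object once it has stayed put for more than N frames."""

    def __init__(self, min_iou=0.9, max_still_frames=30):
        self.min_iou = min_iou
        self.max_still_frames = max_still_frames
        self.anchor_box = None   # box where the object was first seen resting
        self.still_frames = 0

    def update(self, box):
        if self.anchor_box is not None and iou(self.anchor_box, box) >= self.min_iou:
            self.still_frames += 1
        else:
            # Object moved: restart the count from its new position.
            self.anchor_box = box
            self.still_frames = 0
        return self.still_frames > self.max_still_frames


check = StationaryCheck()
parked_car = (100, 100, 200, 160)
flags = [check.update(parked_car) for _ in range(32)]
print(flags[30], flags[31])
```

Comparing against the anchor box rather than the previous frame means a slowly drifting object is not mistaken for a parked one.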

Operators can also set up polygonal masks to exclude specific areas of the image from detection. For example, in a real deployment config we used, one camera had a mask on the top portion of the frame to ignore motion from a timestamp overlay.
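A polygonal mask check can be sketched with the classic ray-casting test. How the real system decides that a detection falls inside a mask is not stated here, so testing the box centre is an assumption, and the mask coordinates are invented.

```python
def point_in_polygon(x, y, polygon):
    """Ray-casting test: is (x, y) inside the polygon given as [(x, y), ...]?"""
    inside = False
    n = len(polygon)
    for i in range(n):
        x1, y1 = polygon[i]
        x2, y2 = polygon[(i + 1) % n]
        # Does this edge cross the horizontal line through y?
        if (y1 > y) != (y2 > y):
            x_cross = x1 + (y - y1) * (x2 - x1) / (y2 - y1)
            if x < x_cross:
                inside = not inside
    return inside


def is_masked(box, masks):
    """Assumed rule: a detection is ignored if its centre lies in any mask."""
    cx, cy = (box[0] + box[2]) / 2, (box[1] + box[3]) / 2
    return any(point_in_polygon(cx, cy, m) for m in masks)


# Hypothetical mask covering a timestamp overlay along the top of the frame.
timestamp_mask = [(0, 0), (640, 0), (640, 40), (0, 40)]
print(is_masked((300, 10, 340, 30), [timestamp_mask]))
print(is_masked((300, 200, 340, 400), [timestamp_mask]))
```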

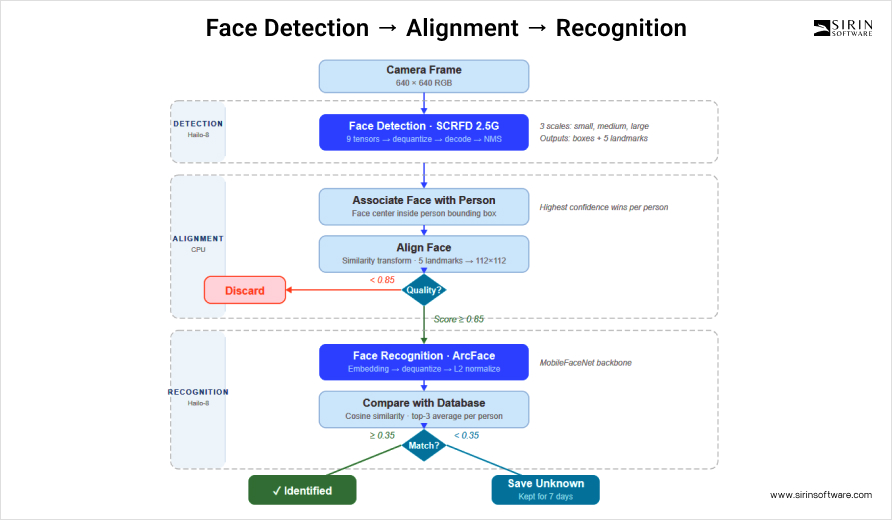

Face Detection and Recognition

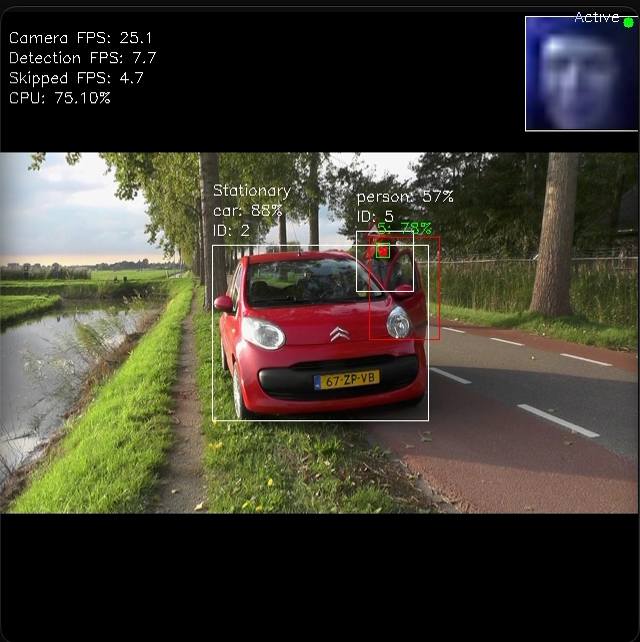

Face detection only runs when the system sees unidentified persons. If all tracked people already have a name assigned, the face detection model is not triggered. This selective approach saves accelerator time for other tasks.

When face detection does run, the SCRFD model processes the 640×640 frame and outputs nine quantized tensors – three per scale, covering small, medium, and large faces. Each tensor gets dequantized using its own scale and zero-point values read from the HEF metadata. After dequantization, the system decodes bounding boxes and five-point facial landmarks (eyes, nose, mouth corners) using anchor grids at three different scales.

Getting the face landmark decoding right was not straightforward. Our engineers noticed that the target landmark coordinates in our implementation did not match the reference example exactly. We had to update the scales and adjust how landmarks were decoded for different face sizes before the alignment worked correctly.

After detection, the system associates each face with a person bounding box from the object detection stage. It checks which person box contains the center point of the detected face. If multiple faces compete for the same person, the one with the highest confidence wins. Faces that overlap with exclusion masks are skipped.
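The association rule can be sketched like this. The data layout and the function name are hypothetical, and one detail is an assumption: when a face centre falls inside several person boxes, the sketch assigns it to the first one it finds.

```python
def associate_faces(faces, persons):
    """Match faces to person boxes by face-centre containment.

    faces:   list of (box, confidence), box = (x1, y1, x2, y2)
    persons: dict of person_id -> box
    Returns a dict person_id -> index of the winning face.
    """
    winners = {}
    for idx, (box, score) in enumerate(faces):
        cx, cy = (box[0] + box[2]) / 2, (box[1] + box[3]) / 2
        for pid, p in persons.items():
            if p[0] <= cx <= p[2] and p[1] <= cy <= p[3]:
                # Highest-confidence face wins when several compete.
                if pid not in winners or score > faces[winners[pid]][1]:
                    winners[pid] = idx
                break  # assumption: a face belongs to at most one person
    return winners


persons = {"p1": (0, 0, 100, 200), "p2": (200, 0, 300, 200)}
faces = [
    ((40, 10, 60, 30), 0.6),       # inside p1
    ((30, 20, 70, 60), 0.9),       # inside p1, higher confidence
    ((240, 10, 260, 30), 0.7),     # inside p2
    ((500, 500, 520, 520), 0.95),  # no person box contains it
]
print(associate_faces(faces, persons))
```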

Each matched face is then aligned into a normalized 112×112 crop. The five facial landmarks drive a similarity transform that straightens and centers the face. A quality score between zero and one evaluates the alignment based on rotation, centering, size, and symmetry. Only faces with a quality score above 0.85 are saved for later review. Anything below that is too poor to be useful.
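A similarity transform (rotation, uniform scale, translation) can be fitted to the five landmarks with a closed-form least-squares solution. The sketch below treats points as complex numbers, which keeps the math short. The target coordinates are the 112×112 landmark template commonly used with ArcFace models; whether this project used exactly these values is an assumption, and the function names are hypothetical.

```python
# Landmark template widely used for 112x112 ArcFace crops (assumed here):
# left eye, right eye, nose, left mouth corner, right mouth corner.
ARCFACE_TEMPLATE = [
    (38.2946, 51.6963), (73.5318, 51.5014), (56.0252, 71.7366),
    (41.5493, 92.3655), (70.7299, 92.2041),
]


def fit_similarity(src, dst):
    """Least-squares fit of dst ~= a * src + t, with points as complex numbers.

    The complex factor a encodes scale and rotation, t encodes translation.
    """
    z = [complex(x, y) for x, y in src]
    w = [complex(x, y) for x, y in dst]
    z_mean, w_mean = sum(z) / len(z), sum(w) / len(w)
    num = sum((wi - w_mean) * (zi - z_mean).conjugate() for zi, wi in zip(z, w))
    den = sum(abs(zi - z_mean) ** 2 for zi in z)
    a = num / den
    return a, w_mean - a * z_mean


def transform_point(a, t, point):
    p = a * complex(*point) + t
    return p.real, p.imag


def affine_matrix(a, t):
    """Same transform as a 2x3 matrix, the shape image-warping routines expect."""
    return [[a.real, -a.imag, t.real], [a.imag, a.real, t.imag]]


# Hypothetical detected landmarks: the template rotated 90 degrees, doubled, shifted.
detected = [transform_point(2j, 100 + 50j, p) for p in ARCFACE_TEMPLATE]
a, t = fit_similarity(detected, ARCFACE_TEMPLATE)
print(round(abs(a), 3), affine_matrix(a, t))
```

The 2x3 matrix can then be passed to an image-warping function such as OpenCV's `cv2.warpAffine` with an output size of 112×112 to produce the aligned crop.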

Once aligned, the face crop is fed into the ArcFace MobileFaceNet model. What comes out is an embedding vector – think of it as a numerical fingerprint of that face. The raw integer output gets dequantized first, then L2-normalized to unit length, which is necessary for the next step: cosine similarity comparison. The system checks this embedding against every stored embedding in the database. Rather than relying on a single best match, it takes the top three most similar embeddings for each known person and averages them. If that average score lands above 0.35, the person is identified.

Why 0.35? That is lower than what most face recognition systems use. The reason is quantization drift – the INT8 model produces slightly different embeddings than the FP32 original. The top-3 averaging strategy compensates for occasional poor embeddings from suboptimal angles or lighting.
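The matching logic can be sketched in plain Python. The gallery layout (a dict of name to stored unit-length embeddings) and the function names are hypothetical, and the toy vectors are two-dimensional only to keep the example readable; the top-3 averaging and the 0.35 threshold are the values from the text.

```python
import math


def l2_normalize(v):
    norm = math.sqrt(sum(x * x for x in v))
    return [x / norm for x in v]


def identify(query, gallery, top_k=3, threshold=0.35):
    """Return the best-matching name, or None if no score exceeds the threshold.

    gallery: dict name -> list of L2-normalized embeddings for that person.
    For unit vectors the dot product equals cosine similarity.
    """
    q = l2_normalize(query)
    best_name, best_score = None, threshold
    for name, embeddings in gallery.items():
        sims = sorted(
            (sum(a * b for a, b in zip(q, e)) for e in embeddings), reverse=True
        )[:top_k]
        if not sims:
            continue
        score = sum(sims) / len(sims)  # average of the top-k similarities
        if score > best_score:
            best_name, best_score = name, score
    return best_name


gallery = {
    "anna": [l2_normalize(v) for v in ([1, 0], [0.9, 0.1], [0.8, 0.2], [0, 1])],
    "bob": [l2_normalize([0, 1])],
}
print(identify([1, 0.05], gallery), identify([-1, 0], gallery))
```

Note how one poor stored embedding for "anna" (the last one) does not hurt the result, because only the three best similarities are averaged.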

At startup, the system processes all images in the known faces directory – detecting faces, aligning them, generating embeddings, and storing everything in an SQLite database. Unknown faces that pass the quality threshold are automatically saved to a separate directory and kept for seven days by default, after which they are cleaned up. During this period, an operator can review them and move selected images into the known faces folder to teach the system new people. No retraining is needed – the system generates new embeddings on the next restart.

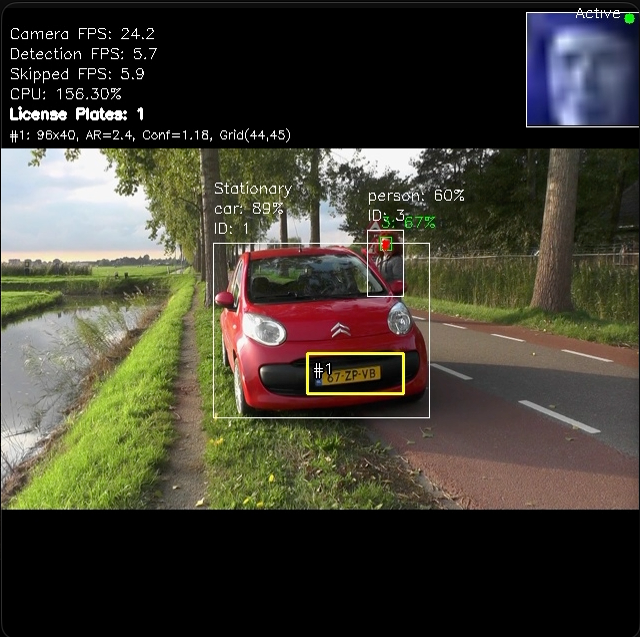

License Plate Detection and OCR

License plate detection uses its own dedicated model – YOLOv8n with ReLU6 activation. We used a separate model instead of trying to add license plates to the general object detector because plates have a very different aspect ratio and scale than people or cars. A dedicated single-class model runs faster and can be tuned specifically for plate geometry.

The model outputs six tensors – three classification heads and three regression heads – at strides of 8, 16, and 32. Each tensor is dequantized using per-layer scale and zero-point values. The postprocessing code applies stride-specific bounding box dimensions that we tuned empirically for European license plate proportions. At stride 8 (fine scale), the width-to-height ratio is 12:5. At stride 16, it shifts to 10:3.5. At stride 32 (coarse scale), it becomes 8:2.5. These values reflect the fact that license plates typically have a width-to-height ratio between 3:1 and 5:1.
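To show what the three strides mean in practice, the sketch below enumerates the grid of cell centres for each head on a 640×640 input and pairs it with the proportions quoted above. Placing the centre at `(index + 0.5) * stride` is a common convention assumed here, and the sketch deliberately stops short of the box decoding itself, since the exact way the proportions enter that step is not described in this article.

```python
INPUT_SIZE = 640
STRIDES = (8, 16, 32)

# Width:height proportions quoted in the text, keyed by stride.
PLATE_PROPORTIONS = {8: (12.0, 5.0), 16: (10.0, 3.5), 32: (8.0, 2.5)}


def grid_centers(stride, input_size=INPUT_SIZE):
    """Pixel coordinates of every grid-cell centre for one detection head."""
    cells = input_size // stride
    return [
        ((col + 0.5) * stride, (row + 0.5) * stride)
        for row in range(cells)
        for col in range(cells)
    ]


for stride in STRIDES:
    w, h = PLATE_PROPORTIONS[stride]
    print(f"stride {stride}: {len(grid_centers(stride))} cells, w/h = {w / h:.2f}")
```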

After non-maximum suppression with an IOU threshold of 0.4, the system crops detected plate regions from the original-resolution frame. We then run histogram equalization on each crop to boost contrast – without this, the text recognizer struggles in low light or when part of the plate is in shadow.
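Greedy non-maximum suppression with the 0.4 IOU threshold mentioned above can be sketched as follows. This is a plain-Python illustration with hypothetical function names and invented boxes, not the project's postprocessing code.

```python
def iou(a, b):
    """Intersection over union of two (x1, y1, x2, y2) boxes."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union if union > 0 else 0.0


def nms(boxes, scores, iou_threshold=0.4):
    """Return indices of boxes to keep, highest score first."""
    order = sorted(range(len(boxes)), key=lambda i: scores[i], reverse=True)
    keep = []
    for i in order:
        # Keep a box only if it does not overlap too much with a kept one.
        if all(iou(boxes[i], boxes[j]) <= iou_threshold for j in keep):
            keep.append(i)
    return keep


boxes = [(0, 0, 100, 30), (5, 0, 105, 30), (200, 200, 300, 230)]
scores = [0.9, 0.8, 0.7]
print(nms(boxes, scores))
```

The second box overlaps the first almost completely, so it is suppressed and only the first and third survive.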

For the actual OCR, we use the fast_plate_ocr library with a European plate model. It runs on the CPU, not on the Hailo. This is a deliberate choice – running OCR on the CPU keeps the Hailo accelerator free for detection models that need more compute power. The library returns recognized characters along with per-character confidence scores, which the system uses to assess reading quality.

Getting license plate recognition to work well on the Hailo required several attempts. We first tried EasyOCR, then converted a license plate detection model to ONNX format and attempted to convert it to HEF using the Hailo Dataflow Compiler and Model Zoo.

Our engineers also retrained the LPRNet model on a new dataset and tried converting the fast_plate_ocr ONNX model to HEF. In the end, the CPU-based OCR path gave the most reliable results, and the Hailo-based LPRNet was kept as an alternative option.

Sharing One Accelerator Between Five Models

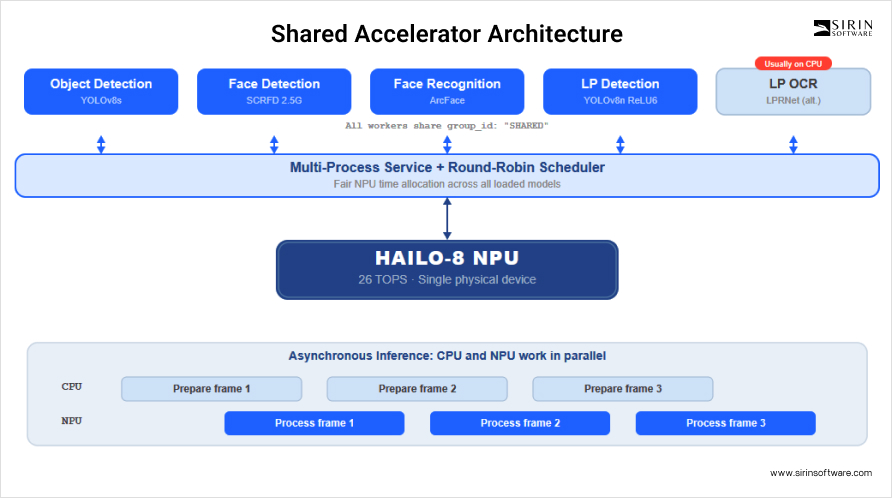

One of the biggest engineering challenges was running five AI models on a single Hailo-8 device without any of them blocking the others. Each model runs in its own inference worker process, and all of them compete for time on the same physical chip.

We solved this using three mechanisms in the Hailo runtime. First, round-robin scheduling distributes inference time fairly across all loaded models. Second, the multi-process service acts as a broker that manages access from multiple worker processes to the single device. Third, all workers register under the same shared group ID so the scheduler can coordinate them.

Each inference worker wraps the Hailo API in an asynchronous pattern. While the Hailo processes one frame, the CPU prepares the next one – converting color spaces, resizing, and handling queue logic. This overlap between CPU and NPU work keeps both busy and improves throughput.

Memory Architecture and Performance

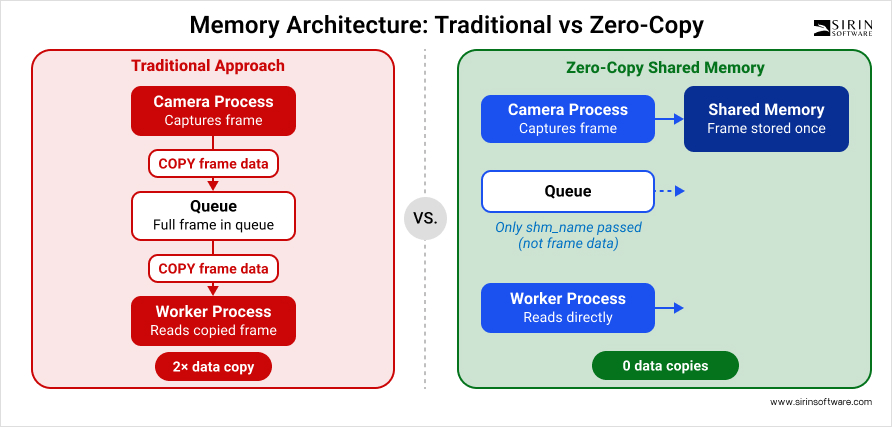

Frames from cameras are never copied through inter-process queues. Instead, the camera capture process writes raw YUV frames directly into shared memory. When a frame needs to be processed, only the shared memory name is passed through the queue – not the data itself. Inference workers read the frame data directly from shared memory by name. This zero-copy approach is critical when handling multiple cameras at 5 FPS each, because copying full frames between processes would create a major bottleneck.
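The pattern of passing only a shared memory name can be sketched with Python's standard `multiprocessing.shared_memory` module. Whether the project uses this exact module is an assumption, and the helper names are hypothetical. The demo runs in a single process and copies the bytes on read so the segment can be closed cleanly; a real worker would wrap `shm.buf` directly (for example with `numpy.frombuffer`) to avoid that copy.

```python
from multiprocessing import shared_memory
import queue


def yuv420_size(width, height):
    """Bytes in one YUV 4:2:0 frame: full-size Y plane plus quarter-size U and V."""
    return width * height * 3 // 2


def publish_frame(frame_bytes):
    """Capture side: write a frame into a new shared memory segment."""
    shm = shared_memory.SharedMemory(create=True, size=len(frame_bytes))
    shm.buf[:len(frame_bytes)] = frame_bytes
    return shm


def read_frame(name, size):
    """Worker side: attach to the segment by name and read the frame."""
    shm = shared_memory.SharedMemory(name=name)
    try:
        return bytes(shm.buf[:size])  # copy only for this demo
    finally:
        shm.close()


frame = bytes(yuv420_size(16, 8))          # tiny stand-in for a camera frame
segment = publish_frame(frame)
work_queue = queue.Queue(maxsize=1)
work_queue.put((segment.name, len(frame)))  # only the name travels in the queue
try:
    name, size = work_queue.get()
    print(len(read_frame(name, size)))
finally:
    segment.close()
    segment.unlink()
```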

Each inference worker also pre-allocates its output arrays at startup instead of creating new ones for every frame. This avoids the cost of repeated memory allocation during real-time inference.

The queue design adds another layer of efficiency. Every camera has dedicated input and output queues for each inference stage, and each queue is limited to a single pending item. If the pipeline falls behind the camera’s frame rate, old frames are automatically dropped instead of piling up in memory. This creates natural back-pressure – the system always processes the most recent frame rather than working through a growing backlog.
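A single-slot queue that always holds the newest frame can be sketched like this. The helper name `put_latest` is hypothetical, and the demo uses the thread-level `queue.Queue` for simplicity; `multiprocessing.Queue` offers the same `put_nowait` and `get_nowait` calls for the multi-process case.

```python
import queue


def put_latest(q, item):
    """Put item into a bounded queue, evicting the stale entry if it is full.

    Returns True if an older pending item was dropped.
    """
    dropped = False
    while True:
        try:
            q.put_nowait(item)
            return dropped
        except queue.Full:
            try:
                q.get_nowait()   # discard the frame nobody consumed in time
                dropped = True
            except queue.Empty:
                pass             # a consumer took it first; just retry


frames = queue.Queue(maxsize=1)
print(put_latest(frames, "frame-1"))  # queue was empty, nothing dropped
print(put_latest(frames, "frame-2"))  # frame-1 was still pending and is dropped
print(frames.get_nowait())
```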

Event Management and Recording

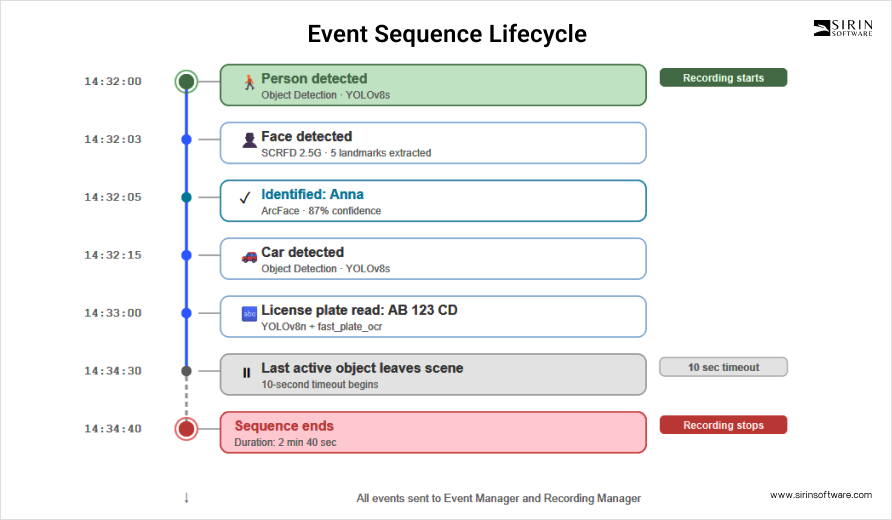

The system groups detections into meaningful events. When the pipeline sees active (moving) objects in a scene, it starts a new event sequence. Each sequence gets a unique ID, and every detection that happens during this sequence – a new person, a recognized face, or a vehicle – is logged as part of that sequence. When no active objects are visible for more than ten seconds, the sequence ends.
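The sequence logic is a small state machine, sketched below. The class name, the return values, and the use of `uuid` for IDs are assumptions for illustration; only the ten-second idle timeout comes from the text.

```python
import uuid


class SequenceTracker:
    """Opens a sequence on activity and closes it after an idle timeout."""

    def __init__(self, idle_timeout=10.0):
        self.idle_timeout = idle_timeout
        self.sequence_id = None
        self.last_active = None

    def update(self, now, has_active_objects):
        """Feed one frame's result; returns "start", "end", or None."""
        if has_active_objects:
            self.last_active = now
            if self.sequence_id is None:
                self.sequence_id = uuid.uuid4().hex
                return "start"
            return None
        if self.sequence_id is not None and now - self.last_active > self.idle_timeout:
            self.sequence_id = None
            return "end"
        return None


tracker = SequenceTracker()
timeline = [(0.0, True), (5.0, True), (15.0, False), (15.1, False)]
print([tracker.update(t, active) for t, active in timeline])
```

Passing the timestamp in explicitly, rather than reading the clock inside the class, keeps the logic easy to test.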

This approach prevents the system from generating thousands of disconnected detection alerts. Instead, it produces structured event records: “a person arrived at 14:32, was identified as Anna at 14:33, and the sequence ended at 14:45.” Each event is timestamped and sent through an internal event queue to both the recording manager and an event manager.

Recording is tied to event sequences. When a new sequence starts, the recording manager begins saving the high-resolution stream to disk. When the sequence ends, recording stops. This event-driven approach means the system only records when something is actually happening, which saves significant disk space compared to continuous recording. The system also monitors disk usage and enforces a configurable limit – typically 80 to 90 percent of available space. Recordings older than a set retention period are automatically cleaned up.
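The two housekeeping checks can be sketched with the standard library. The function names, the 85 percent limit, and the seven-day retention used in the demo are hypothetical values; the article only states that both limits are configurable.

```python
import os
import shutil


def disk_usage_fraction(path):
    """Fraction of the filesystem holding `path` that is currently used."""
    usage = shutil.disk_usage(path)
    return usage.used / usage.total


def expired_recordings(directory, max_age_seconds, now):
    """Paths of files in `directory` whose modification time is too old."""
    expired = []
    with os.scandir(directory) as entries:
        for entry in entries:
            if entry.is_file() and now - entry.stat().st_mtime > max_age_seconds:
                expired.append(entry.path)
    return sorted(expired)


if __name__ == "__main__":
    import time

    recordings_dir = "."          # hypothetical recordings directory
    if disk_usage_fraction(recordings_dir) > 0.85:
        print("disk limit exceeded, oldest recordings should be removed")
    print(expired_recordings(recordings_dir, 7 * 24 * 3600, time.time()))
```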

Beyond local processing, the system can also send event frames to an external cloud-based LLM for scene description. When this feature is enabled, the pipeline captures three frames at one-second intervals during an active event and sends them over MQTT along with metadata – how many people and vehicles were detected, and which persons were identified. The cloud LLM then generates a natural language description of what is happening in the scene. This feature is optional and can be enabled per camera or globally.

Configuration and Deployment

The system is fully configurable through YAML files. Each camera is defined with its RTSP stream URLs, detection resolution, frame rate, and optional zone masks. AI features – face detection, face recognition, license plate detection – can be toggled on or off independently. Cameras can be marked as indoor or outdoor, which affects whether certain features like the cloud LLM integration are activated. A real deployment configuration from testing included five cameras at different resolutions (704×576, 736×416), with both indoor and outdoor setups, zone masks for ignoring motion in specific areas, and all AI features enabled.

The entire system runs inside Docker on the Raspberry Pi. The Hailo runtime (HailoRT 4.20.0) is installed as an ARM64 package. FFmpeg handles stream capture, go2rtc acts as an RTSP relay for re-streaming to mobile apps, and MQTT provides communication with external systems like alarm panels. For managing device deployments at scale, the system supports Balena – a platform for deploying and updating software on fleets of embedded devices.

Part of our work also involved supporting the newer Raspberry Pi CM5 board alongside the original CM4. Getting Balena to work on the CM5 with Hailo support required coordination with Balena’s own development team, since Hailo driver integration was not yet part of their official CM5 support at the time. Our engineers tracked this effort and contributed to making sure the Hailo NPU worked correctly on both board variants.

Beyond the AI pipeline, our team also worked on peripheral hardware integration for the Helma board. This included getting a Zigbee chip to communicate correctly – which initially failed due to a small mistake in the device tree configuration – and setting up PWM-controlled cooling fans for both the CPU and the NPU. These are small tasks on paper, but they are the kind of work that has to be done right for a product to run reliably in real deployments.