Scaling Amazon Aurora MySQL

The goal of this article is to share experience of how to scale Amazon Aurora MySQL to handle 5M requests per minute over a REST API. (Read more: Scaling Node.js microservices on AWS to handle 5M requests per minute)

QUERY PROFILING

We have an existing database on Amazon Aurora MySQL and a REST API application on Amazon ECS (Node.js). This work will be focused on the single most used API endpoint.

First of all, we need to find all queries that use our app. Let’s do this on the local environment and MySQL on the Docker container.

1. Turn on database logs for all queries

SET global general_log = 1; SET global log_output = 'table';

2. Send a request to our REST API endpoint

curl http://localhost:4000/test-endpoint

3. Fetch all queries for the last 60 seconds and filter some noise

select argument from mysql.general_log where event_time > (now() - INTERVAL 60 SECOND) and command_type = "Query" and argument <> "" and argument <> "SET NAMES 'UTF8'" and argument <> "SET time_zone = '+00:00'" and argument <> "SET PROFILING=1" and argument <> "SHOW STATUS" and argument NOT LIKE '%general_log%';

QUERY OPTIMIZATIONS

Now we can analyze each query filter and check indexes on the table. Also, we can execute the query in MySQL Workbench and check the execution plan to figure out which indexes we actually use.

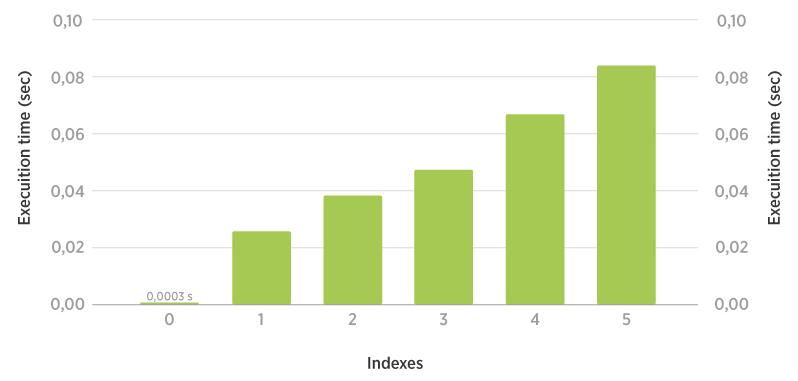

How indexes impact insert queries

Some tips

- Update by Primary Key where possible.

- Replace a couple inserts with Bulk Inserts — a single transaction.

- Replace a couple selects with a single select and filter data on the server side.

- Remove as many write queries as possible. If you can, delay them with a message queue. Write operations will limit your scalability with a single master cluster.

- Use Stored Procedures — precompiled (not for MySQL); less data transfer between app and database server; a better place for complex logic; fewer round trips; more secure; reusable.

UPGRADE RDS AURORA

We needed to change instance type for RDS from db.t2.medium to db.r4.xlarge. But this is not possible: before doing this, it’s necessary to upgrade Aurora v1.14.1 to a newer version of Aurora (v2.03). The problem was that we can’t upgrade the major version without downtime.

The only possible solution was to create a new RDS cluster from the snapshot and choose a new Aurora version and a new instance type. We couldn’t avoid 15 min of downtime. But in the future, we will be able to change instance type with zero downtime—this was required only for the new major version of Aurora.

CONNECTION POOL

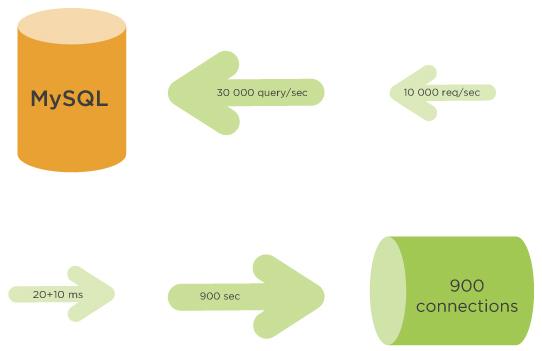

The maximum size of the connection pool plays a critical role. Pool size depends on many factors, like instance type (hardware performance) and execution time of queries.

In this case, the connection pool size was limited to five connections. This means that if all five connections are busy, new requests will wait for a free one. We have increased the connection pool max size to 200. This means that the application can keep up to 200 connections open if necessary.

How to determine the connection pool size?

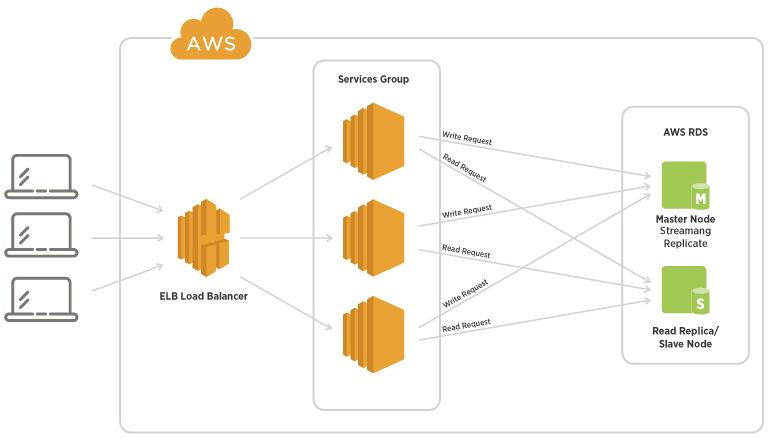

RDS READ REPLICAS

We can increase the performance of the read queries by adding more read replicas. RDS is a single master cluster. We can have only one server to handle write queries and up to 15 replicas (just for the Aurora engine) to handle read queries.

SEQUELIZE REPLICATION

Now we need to configure Sequalize (ORM for Node.js) to split read and write queries. We should send read queries to any read replica and write queries only to our master.

RDS provides two cluster endpoints: Write to a single master and Read to any read replica with round-robin load balancing.

Sequelize config to use replication:

replication: {

read: [{ host: 'my-database-ro.rds.amazonaws.com'}],

write: { host: 'my-database.rds.amazonaws.com'}

}

RDS AUTO-SCALING

Auto-Scaling for RDS means we can create a policy to automatically add more read replicas when we need them. But we can’t scale the master. So, we can scale read queries to huge values (up to 15 instances), but write queries are limited to the single most powerful instance for the master.

We need to create two Auto Scaling policies:

- Avg CPU = 60% (for example)

- Avg connections = 3500 (4000 max for my instance type)

The second policy tracks connections ONLY over replicas, so you need to have at least one replica.

CONCLUSION

- Find your Connection pool size (bear in mind that RDS has database connection limits depending on instance type, but you can change them).

- RDS is a single-master cluster. You can easily scale Read queries by changing the instance type or adding more read replicas. But Write queries can be scaled only by changing the instance type. So keep in mind that your write queries are limited to the most powerful instance from AWS.

- Use RDS read replicas with Sequelize replication.

- Different microservices have different data storage requirements. For example, some databases should be ACID (SQL DBs), and some can be BASE (NoSQL, MongoDB, Cassandra) to be more scalable.

TAKEAWAYS

This article shares our knowlege which we use on practice. See our last case study: Increasing the scalability of a cloud-based system for IoT products. If you need some advice with our cloud system, Sirin Software has expertise in this area and is ready to share its knowledge with you. Contact us and we’ll be happy to find the best solution together with you!